Mary the Brilliant Computer Scientist

Can she put her brain in the state of understanding chess?

The following is a variation on the Knowledge Argument, of which the best formulation is probably the comic at the end of this post.1 Now, without further ado, meet:

The Brilliant Chess Engine Scientist Genius Scientist Mary

Mary is a brilliant computer scientist who, for whatever reason, grew up without ever encountering2 chess. In her work, however, she specialises in computer chess engines3 and she learns all the facts about them. Mary knows just how the billions of transistors are arranged in a computer such that they can execute the code that implements algorithmic searches through several hundred million chess positions per second, which in turn results in the moves that the chess engine selects.

Once Mary discovers that chess engines play chess, a game that many humans engage with, she realises she ought to be pretty good at this game. Mary is permitted to compete in the famous computer chess tournament TCEC, which she decisively wins.4 The best chess players in the world are, of course, very impressed by this. They invite Mary to learn from them how the best humans play and think about chess. Since Mary is very interested in the human mind too, she accepts their offer.

And so, the world’s best chess players teach Mary everything they know about chess. As it turns out, Mary is so talented that she can absorb all of this too. Based on her play (where she ignores what a chess engine would have done), and based on her way of talking about chess, they conclude that Mary truly understands the game of chess as well as anyone else.

Now, the question arises: did Mary learn something new? It seems just obvious that by learning from the best humans, Mary gains a new kind of understanding of top-level chess play, an understanding she can’t even begin to fathom by merely knowing all the facts about the goings-on in a mechanistic algorithm-executing chess-playing computer. Surely, that must be the case. Right?

Nooooooooo! That is not the case. Tempting a response as it may be (one I would previously have supported), I think it is wholly wrong and mistaken. Yes, Mary learns a whole lot of new things, some of which may be very useful, but not the things that the “real understanding” folks purport. With respect to truly understanding chess excellence, Mary learns nothing new at all.5 In what follows, I will do my best to convince you that this is the case.

On understanding pins

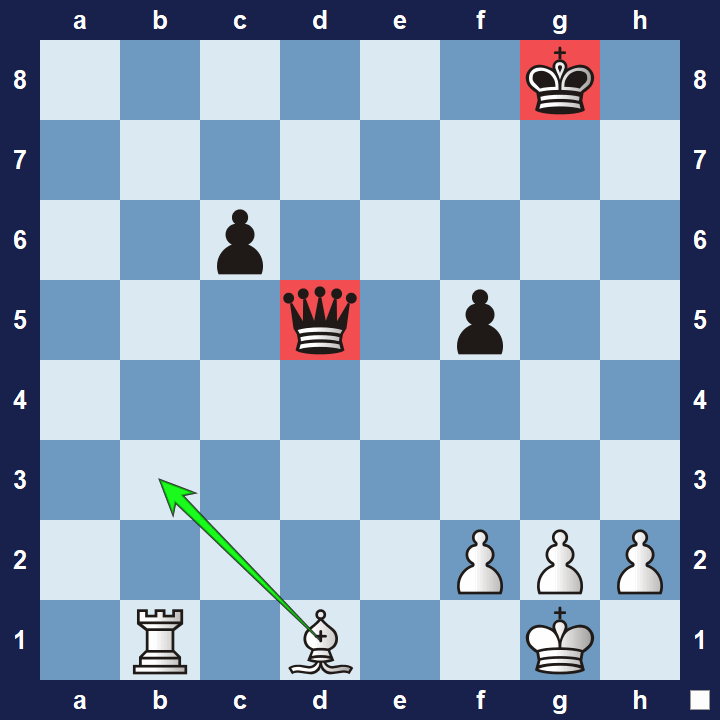

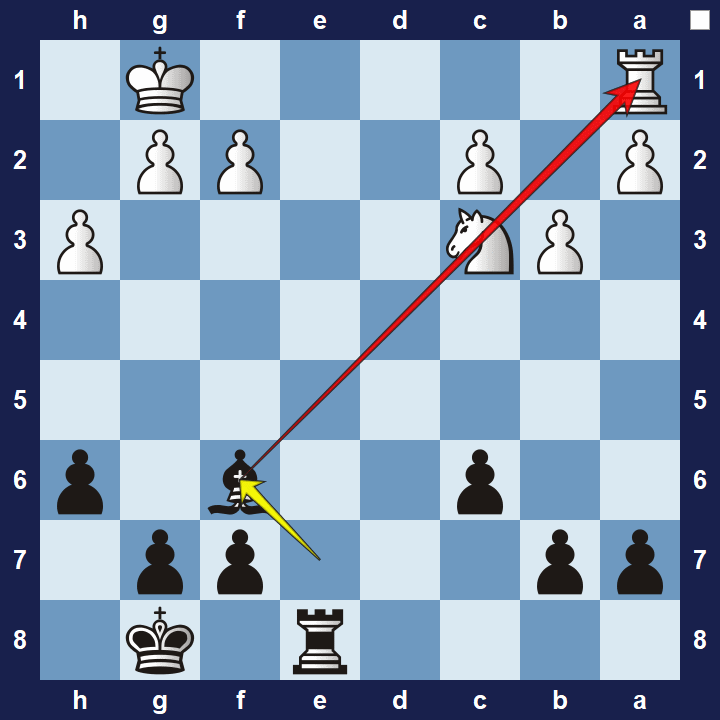

Let’s take a look at a relatively simple but hugely important concept in chess known as the pin. A pin is a tactic in which a defending piece cannot move out of an attacking piece’s line of attack without exposing a more valuable defending piece.
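For the programmers among you, here is a minimal sketch of that definition in Python. Everything in it is an assumption made for illustration: the board representation (a dict from `(file, rank)` squares to strings like `"wK"`), the conventional piece values, and the hypothetical function name `find_pins`. It is not meant to describe how any actual chess engine handles pins; it only shows that "this piece is pinned" is the kind of thing that can be read off a position.

```python
# Illustrative piece values (a common convention, assumed here). The king gets
# a very large value so that any piece standing in front of it counts as pinned.
VALUES = {"P": 1, "N": 3, "B": 3, "R": 5, "Q": 9, "K": 1000}

# Directions in which each line-moving piece attacks.
ROOK_DIRS = [(1, 0), (-1, 0), (0, 1), (0, -1)]
BISHOP_DIRS = [(1, 1), (1, -1), (-1, 1), (-1, -1)]
DIRS = {"R": ROOK_DIRS, "B": BISHOP_DIRS, "Q": ROOK_DIRS + BISHOP_DIRS}


def find_pins(board, attacker_colour):
    """Return (attacker_square, pinned_square, shielded_square) triples.

    `board` maps (file, rank) squares, each coordinate in 0..7, to strings
    such as "wK" or "bR" (colour letter, then piece letter).
    """
    pins = []
    for square, piece in board.items():
        colour, kind = piece[0], piece[1]
        if colour != attacker_colour or kind not in DIRS:
            continue
        for df, dr in DIRS[kind]:
            seen = []  # first two occupied squares along this ray, nearest first
            f, r = square[0] + df, square[1] + dr
            while 0 <= f < 8 and 0 <= r < 8 and len(seen) < 2:
                if (f, r) in board:
                    seen.append((f, r))
                f, r = f + df, r + dr
            if len(seen) < 2:
                continue
            front, behind = board[seen[0]], board[seen[1]]
            # Both pieces must belong to the defender ...
            if front[0] == attacker_colour or behind[0] == attacker_colour:
                continue
            # ... and the piece behind must be worth more than the one in front.
            if VALUES[behind[1]] > VALUES[front[1]]:
                pins.append((square, seen[0], seen[1]))
    return pins


# Black rook on e8, white knight on e4, white king on e1:
# the knight cannot leave the e-file without exposing the king.
example = {(4, 7): "bR", (4, 3): "wN", (4, 0): "wK"}
print(find_pins(example, "b"))  # [((4, 7), (4, 3), (4, 0))]
```

The sketch only looks at rooks, bishops and queens as attackers, since those are the pieces that attack along a line, and it ignores everything else about the position (whose turn it is, whether the pin can be broken, and so on).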

Learning all about and mastering pins is extremely important in chess. Did pre-release Mary, before learning human chess, know about the pin concept? Did she understand it?

Of course she did! Mary knows just how chess engines work, and chess engines are amazing at pin tactics. Pin tactics are no less important for chess engines than for humans! If in doubt, ask yourself this: is not the pin tactics excellence displayed by chess engines a fact about chess engines? Of course it is. It is one of the many, many facts that Mary knows.

Some object by pointing out that all Mary needs to know is the simple physical goings-on in the chess computer, and then she can figure out what the output will be. This is true, and further, it is true that she wouldn’t need to know about pin tactics in order to figure out the best move. But this is a terrible take. Here’s what also is true: such a “simpleton” Mary doesn’t even need to know that there’s a chess engine running on the computer! She doesn’t need to know jack shit about programming, she doesn’t even need to know what a computer is. That Mary hardly needs to know any of the physical facts about chess engines.

Our Mary, on the other hand, does know about chess engines, and she knows all the facts about them. So she knows about pin tactics.

But surely, Mary learns something new about pins?

Mary does indeed learn something new about pins. In fact, she learns many things.

One thing Mary learns is that English-speaking humans refer to pins as “pins”. If she attached English words in the process of learning about chess engines (perhaps to aid her memorisation), then it is not unlikely that she already referred to pins as “pins” (given the conceptual similarity to what actual pins do), but in any case, she didn’t know that humans referred to pins as “pins”. Now she has learned that new piece of information (AKA fact).

Here is another thing Mary learns: she learns just how and when the best chess players in the world fail to make use of pins as well as the best chess engines do. In learning this, Mary builds an internal model of how the humans think about pins,6 which in turn will make her a far better teacher for them than she could ever be without this knowledge.7

Mary may learn many, many things. But she doesn’t gain any further understanding about how pins work and how to best utilise them. And that is, of course, not only true for pins. This is true for all the short- and long-term tactical and strategic aspects of chess. Those are all facts, and she knows them all. Don’t let the fact that she learns new facts distract you from the fact that she doesn’t gain a deeper understanding of how to play!

Thank you all for listening (literally or figuratively). The rest of this post is a short Q&A, giving room for objections. Read it if you will. If your objection is not listed here, please do post it in the comments!

The rest of this post (Q&A)

Mary doesn’t subjectively feel like she understands chess

That’s not a question, but never mind. Of course she feels like she understands chess! It should be fair to take the liberty to presume that Mary has a reasonably well tuned self-knowledge of what she understands and what she doesn’t understand, and furthermore, that she does not have an inflated or otherwise bad understanding of what it means to understand something.

But how Mary feels ultimately doesn’t matter. It is totally possible that she has been bullied into believing she doesn’t understand all sorts of things that she does indeed understand, and conversely, that she has an inflated view of herself, believing she understands things that she does not understand. People are wrong all the time about what they understand and what they don’t understand. That has no bearing whatsoever on whether Mary actually understands chess or not.

Why are you on about chess engine facts? The relevant analogy for the Knowledge Argument is, surely, chess player facts!

With my variant on the Knowledge Argument, I am trying to make the intuition that Mary gains new (non-trivial) knowledge less attractive, and the intuition that she gains a new (non-trivial) ability even less attractive. It is largely with this in mind that I chose chess engines rather than players. Using human players invites all sorts of inflated concepts of what real understanding and knowledge and ability are, even in many physicalists (myself included, when I’m not careful).

But feel free to swap the chess engine facts with chess player facts: if Mary knew all the facts about the best chess players, then she would not only be able to play as well as they do, but she would understand and talk about chess as well as they do. Yup, that’s the way it would go.

Hang on a minute… Surely, that does not follow? Knowing everything about chess players does not imply that Mary can put her brain in the state of understanding chess the same way that they do?

Yes, it does. It doesn’t enable her to put her brain in exactly the same brain state as they are in, but that would be a terrible idea, since that would entail forgetting who she is. Lucky for Mary, understanding chess the way they understand chess does not require putting herself in any particular global brain state, nor does understanding chess in a particular way come with any such requirements, except in the following sense: what is required is that Mary puts her brain in the state of recalling the facts which constitute understanding chess in whichever way we’re talking about. Such facts can be encoded and recalled in all sorts of ways in brains. If Mary recalls those facts, then she is, by definition, putting her brain in the required brain state.

No! Mary would feel like she’s effortfully and consciously calculating what some other person would do and say. That is not what knowing chess is!

It does not matter if Mary consciously and effortfully must figure out the answer to the question “what would Magnus have done?”,8 even for the very first move in the game. That has no bearing on whether her brain is in the state of understanding a chess position or not. If Mary knows all the facts about these chess prodigies, I think it’s fair to presume she sooner or later knows the good opening moves by heart, but in any case, this is a distraction from the real issue:

Even if Mary has a subjective experience of effortfully calculating, she does know how to open the game, and she does know how to talk about why she opens the game like that, and further, there is nothing more to it.

I think it is probably worth repeating that if Mary has a non-inflated understanding of “understanding”, then she also feels like she understands what she’s doing, including what she’s saying. (I also think it’s fair to assume that all of this becomes second nature to her after a while, but the important conclusions do not hinge on whether that’s true or not.)

Further, it is very tempting to some to imagine that Mary would say something like the following: “I know Magnus would do this move, which he would motivate with this explanation, but I, Mary, have no idea why or what that means!”. But Mary should not say any such thing. If she says that, she either has incomplete knowledge of the facts about Magnus (direct & indirect knowledge), or she has low self-esteem. And such circumstances can make any person say all sorts of crazy things. Let’s not go there.

But if chess Mary might feel like she’s effortfully calculating, then will not Colour scientist Mary feel the same when thinking about red? Surely that is not what experiencing red is like?

I agree with Pete Mandik that knowing what seeing red is like does not depend on being able to mentally visualise red. But I disagree with Pete Mandik who claims (if I’m not mistaken, or he hasn’t changed his mind) that Mary does not necessarily have the ability to visualise red. As long as Mary does not suffer from a memory deficit or mental blockage, is not having a bad day, and has not smoked too much weed (which according to the scientific literature apparently can result in some “blockage” of which I have no first-person subjective experience memories), any of which could cause tip-of-the-tongue or tip-of-the-brain phenomena, Mary can do the following: she can recall all the facts she knows about seeing red, and she can do so at will, without the aid of any red stimuli nearby, and furthermore, she can believe that she can recall all those facts about what seeing red is like. What did she just do? Guess what. She visualised red, and she had the experience of visualising red.9 (I am sincerely very curious as to why Pete objects to Mary being able to visualise red, but he’s a bit secretive. Maybe it mostly comes down to his gripe with equating mental imagery with knowing what seeing red is like?)

But none of that is, of course, a response to the objection at hand (sorry for getting derailed). In my response, I will equate knowing what red is like to performing mental visualisation of red, for a few reasons, one of which is that it sidesteps the debate of whether knowing what red looks like requires being able to visualise it. If Mary can visualise red (which she can), then I hope we all agree that she also knows what it looks like.

So, is it possible to engage in such effortful and conscious calculating and recalling of third-person facts or second-order facts (or whatever you want to call them) about colour vision processing neurons and linguistic activation patterns and whatnot in some other “virtual” person’s brain, all the while feeling extremely mentally strained by this bizarrely complex calculation, and as a result of all this, experience mental imagery of red? Yes, it is possible, and moreover: if Mary does so, without being in denial about what she is doing, then it is unavoidable that she will do so, and experience that she is doing so.10

Whether or not Mary experiences mental strain and conscious calculation does matter for the global brain state, but it does not matter for whether she’s in the state of experiencing red imagery or not. Furthermore, whether or not Mary employs her visual cortex to recall the facts about what red looks like may matter for the similarities of visual cortex activations between her and a “normal” person engaging in mental imagery of red, but it does not matter for whether she’s experiencing red imagery or not, and absolutely not for what-it-is-like to experience said imagery. When Mary recalls(/figures out) what red looks like to normal colour seers, then Mary has, just like chess Mary, successfully put her brain in the required state of engaging in visual imagery of red, and that red has all the redness you could ever wish for.

Do chess engines understand chess?

Oh, I’m glad you asked. Yes, they do. They understand chess far better than any human. What they don’t understand are things like human-specific weaknesses, how to teach chess to humans (tutoring, writing books, making YouTube videos), and lots of other chess-related facts. But they sure as hell understand chess.

Seeing red does not involve knowing facts

Yes Mark, it does. Mary can learn the ability to recall those facts long before she ever learns them from seeing colour.

Do you have aphantasia?

Nope.

1. Pete’s Hail Mary and the discussions that followed it made me change my view on the Knowledge Argument. While I saw myself as a Dennettian prior to this, I now think I was stuck in some Cartesian Materialist residue (Cartesian Materialism is basically when Cartesian dualism or Cartesian Theater intuitions masquerade as materialism, often in spite of the subject denying this).

In this post, I am advocating a deflationary view on knowledge and understanding, which by extension is a deflationary view on what both experiencing colour and imagining colour are. My hope is that this will help one or two physicalists let go of the ability hypothesis, which I am (like Pete Mandik) growing increasingly negative towards. I could be wrong that Cartesian Theater-intuitions are behind the defenders of the ability hypothesis and other opponents of Dennett’s response, but that is my honest hunch, speaking as someone who is himself stuck in such intuitions most of the day. In any case, I tentatively and very humbly suspect that the aforementioned intuitions to some degree take a grip of Disagreeable Me (see this appearance on the excellent show The Persuaders, this post, and earlier, this), Zinbiel, and possibly also Mark Brewer and Flo Bacus (here) and Sean Carroll. In any case, whether my analysis of these people is accurate or not, I do suspect it applies to a faeces-load of physicalists and physicalist-adjacent people.

2. She’s never played it, heard about it, thought about it. No chess.

3. A chess engine is just the preferred term for a chess-playing computer program. Notably, Mary may learn all about chess engines without even realising chess is something humans play!

4. Mary’s mind is not infinitely fast, but she can figure out (or recall) what a chess engine would do faster than the chess engine can, given hardware constraints.

5. Until relatively recently, it was widely recognised that humans had a superior understanding of at least some fringe aspects of chess positions when compared to engines, illustrated by the fact that a human and a chess engine in cooperation were stronger than a chess engine alone. To the best of my knowledge, this is no longer the case.

6. Please note that Mary can learn this merely by watching them play. She does not need to talk to them or know anything else about them. Another possibly noteworthy fact is that she can learn all this without the slightest clue about the neuroscience of chess play.

7. She would be a better teacher because she understands their weaknesses better, not because she understands optimal chess play better.

8. I’m referencing my fellow Swede, the world’s best chess player Magnus Carlsen (although technically he’s Norwegian and Norway is a “free” country).

9. I know many of you will object to this. Sorry, I’m just telling you the way it is.

10. Mary can go different routes to learn everything about what red looks like. She can extrapolate from the neuronal activations in the “virtual” brain (so to speak) just how red objects grab attention, raise the heart rate (without knowing anything about hearts), bring about associations to fire, ripe fruit, and so forth. She can extrapolate the strong contrast between red and grey that people typically experience. She can extrapolate all the sorts of things that humans say in reaction to red colour stimuli, including all the vivid descriptions of seeing red, including poems of red roses, and so forth. It doesn’t matter if Mary is blind from birth: if she’s able to remember or on-the-fly figure out all those facts, she knows what red looks like, and she can visualise it. But she could also, if she doesn’t care about science, just learn all the facts about what red things look like. She can read about people’s experiences of red things, learn about colour optics, and just hang out with colour seers and hear them talk about colour. It might take a while, but so what.

I'm not convinced this analogises to Mary in a useful way.

A lot of it depends on how you define a fact. From my own interpretation of this word, there are vanishingly few chess "facts". Just the rules. The rest is clever rearrangement and deduction. Fixing the rules facts fixes all other purported chess "facts" (which are therefore not new facts, just corollaries), so this sort of analogy cannot begin to intersect with qualia, where it is believed (by some, not me) that fixing one set of facts (physical ones) fails to fix another set (phenomenal ones).

I don't see anyone arguing that low-level chess facts fail to fix high-level chess facts. It's not conceivable that there could be a failure of entailment.

Furthermore, the original KA explicitly asks us to overlook quantitative limitations on Mary's cognition. The issue at hand is the sort of cognitive tools at her disposal, and red-visualisation tools are very different to neural-circuit-analysing tools, which is why she can learn something.

But neural-circuit-analysing tools and chess-position-analysing tools are in the same toolbox; they are essentially the same tools, involving the very same sort of cognitive operations.

As soon as we wave our magic thought-experiment wand and give Mary unlimited analytical cognition, she only needs the rules of chess, and nothing else, to beat every human and chess computer that will ever exist. She is therefore incapable of learning anything new about the game-logic and strategies of chess, because she already knows it all. She can only learn about the cultural and linguistic embedding of those rules.

> But I disagree with Pete Mandik who claims (if I’m not mistaken, or he hasn’t changed his mind) that Mary does not necessarily have the ability to visualise red.

Can I clarify what you mean by necessarily here? Is it a metaphysical claim that being in a particular brain state necessarily (in all possible worlds) leads to the experience of visualising 'red'?

Because if this is the claim I think it conflicts with the chess analogy.

If Mary understands all the "micro" components of chess knowledge, I can see how this necessarily leads to macro knowledge about concepts such as a pin, a fork, etc., but I don't think she necessarily represents them in a particular way.

For example, she could represent them in her mind with little pictures of knights, bishops, queens, etc., or maybe with words saying 'knight', 'bishop', 'queen'. Or maybe she has a different representation entirely. It doesn't follow that she necessarily represents the concept "pin" in a particular way just because she's gained all the microphysical details.